Radio City made headlines this holiday season, not for the Rockettes but for its alleged use of facial recognition to refuse entry to a lawyer who had come to see a show with her child. But privacy and technology experts say this type of biometric screening can have much more serious consequences. [Transcript: Center for Democracy & Technology | Transcript: Coded Bias]

4 takeaways

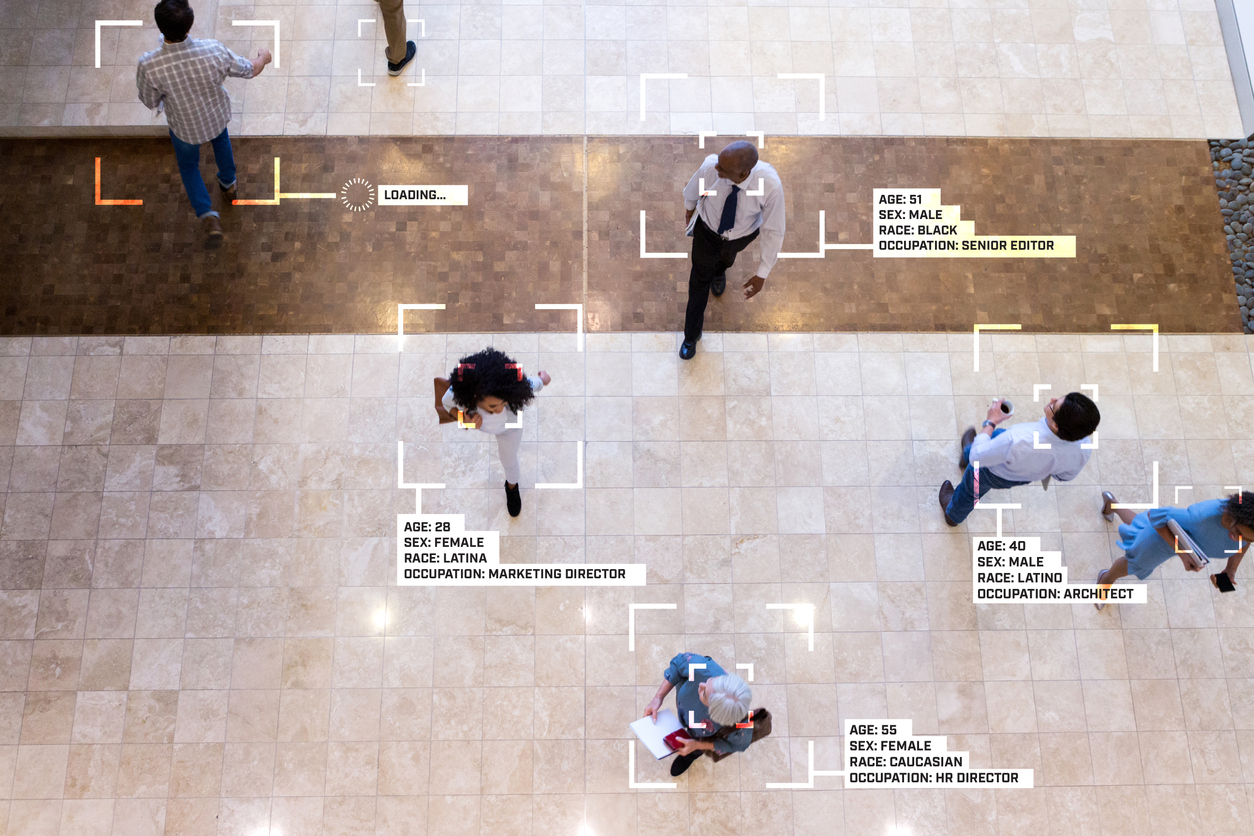

➀ Facial recognition is like the “Wild West.” Facial recognition technology is intended to verify a person’s identity or to identify someone from image or video footage, yet civic groups point out that it is imperfect and particularly inaccurate for people of color.

“What was so chilling to me … was that this racially biased technology was not being beta tested on a shelf somewhere at MIT, this was technology that was being sold to the FBI. It was actively being sold to ICE … to police departments across the country and used often in secret with no one that we elected, no one that represents ‘we, the people’ giving any kind of government oversight,” said Shalini Kantayya, the documentary filmmaker behind “Coded Bias.” [Video: Shalini Kantayya on Coded Bias]

There are few laws governing the technology, said Center for Democracy & Technology President Alexandra Reeve Givens, though places with a greater understanding of it have been among the first to ban government use of it, such as San Francisco and Cambridge, Mass. (home to Harvard and MIT). A state-by-state rundown of measures is available on the Center for Democracy & Technology website.

“Even if this tool were the most accurate thing in the world … we need to worry about the fundamental use of this technology as a threat to freedom, to people’s right to go out into open spaces and convene with others without fear of who might be tracking and cataloging them as they do so,” Reeve Givens said. [Video: Alexandra Reeve Givens & Jake Laperruque]

➁ Law enforcement use of facial recognition is already widespread. “As far as we know, at least one in four police departments have the capacity to use facial recognition. At least half of all federal law enforcement agencies with law enforcement capabilities and roles use facial recognition,” said Jake Laperruque of the Center for Democracy & Technology. “The FBI, which is probably the biggest federal user of the technology, they run thousands of facial recognition scans every single month, sometimes for their own investigations, sometimes to assist state and local law enforcement.”

There have been multiple instances of wrongful arrests due to facial recognition technology. For example, the Detroit Police Department’s facial recognition software falsely identified Robert Williams as a shoplifting suspect, leading to his wrongful arrest.

Facial recognition is “very much like a fingerprint or a genetic swab, both of which require a police warrant to obtain,” Kantayya said. However, with facial recognition, you often don’t know it’s happening.

Laperruque recommends submitting a FOIA request to find out what your local police use. In 2016, Georgetown’s Center on Privacy & Technology published “The Perpetual Line-Up,” based on a state-by-state series of FOIA requests asking law enforcement agencies what face recognition technology they used, where they sourced their information and who was sharing photos for these matching databases, Reeve Givens said.

➂ What does facial recognition technology have to do with democracy? In China, facial recognition has been used to profile the Uyghur population; in Iran, it will be used to identify women who don’t wear a hijab; and in the United States, it was used at protests following the death of Freddie Gray, Reeve Givens said.

“Facial recognition is one of those tools that really is a qualitative changer for – if we don’t put legal limits on it – what the government can know about you, what you’re doing, who is a dissident, who is a protestor, in ways that truly are not compatible with democracy if we do not have proper limits on them,” Laperruque said.

Kantayya expressed similar concerns about facial recognition and other forms of artificial intelligence.

“You have democracies essentially picking up the tools of authoritarian states with no democratic rules in place to govern its use,” Kantayya said. “I really began to understand that everything that I love and value as a free person living in a democracy, be it free speech or fair and accurate elections or equal opportunities in employment, or that communities of color shouldn’t receive undue police scrutiny, that everything I hold dear is being radically transformed and rapidly transformed by algorithmic systems.”

➃ Is there a right or wrong way to use facial recognition? How do you feel about facial recognition being used to identify people in the crowd at the Capitol on Jan. 6 versus at racial justice protests? Reeve Givens said that she and her colleagues, as advocates, strive for consistency “and warn that when a technology is dangerous, the technology is dangerous, even if there are sympathetic fact patterns where one might empathize with the goals of law enforcement.”

Kantayya said there are “endless good uses” for technology and that AI could be used for social good, but “there’s no economic model at the moment that would promote this most powerful technology for public good … as long as we have this model where companies are extracting our data, no economic benefit to us, with us having no recourse or rights to our data, I think that it is a recipe for exploitation.”

In addition to serving as watchdogs over government, law enforcement and corporate uses of these technologies, journalists must help improve public literacy around these issues, Kantayya said.

This program was sponsored by Arnold Ventures and Medtronic. NPF is solely responsible for the content.